Weekly App Install: RSSHub

Heya, it's been a minute, so let's do another one of these. As a refresher, I grab a random repo that looks interesting and try to get it running on Reclaim Cloud. Today's project:

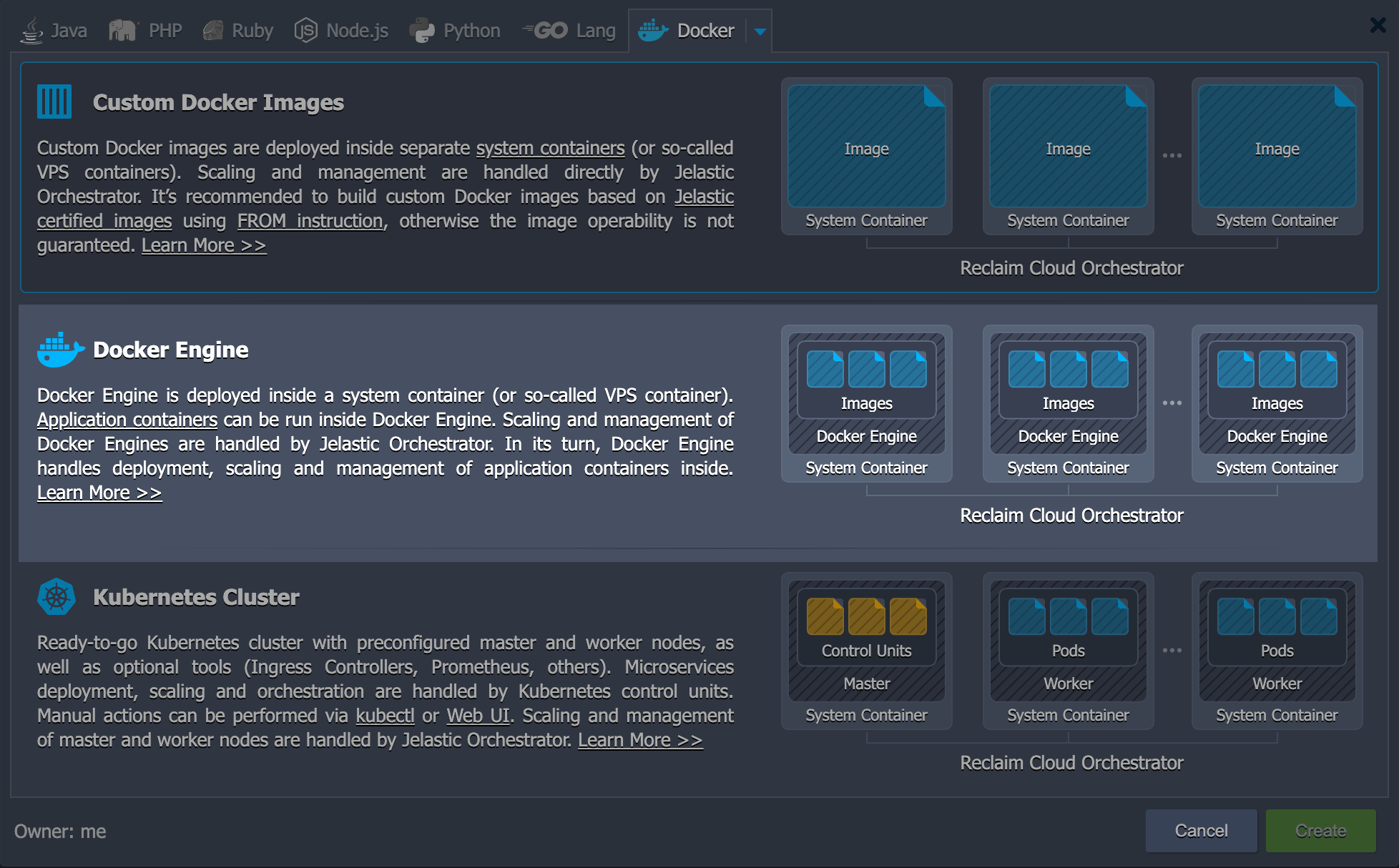

A feed aggregator (is it a reader? It says it can "generate an RSS feed from pretty much anything." Let's see). A link in the README to the deploy options shows we can use Docker Compose, so I'll do that.

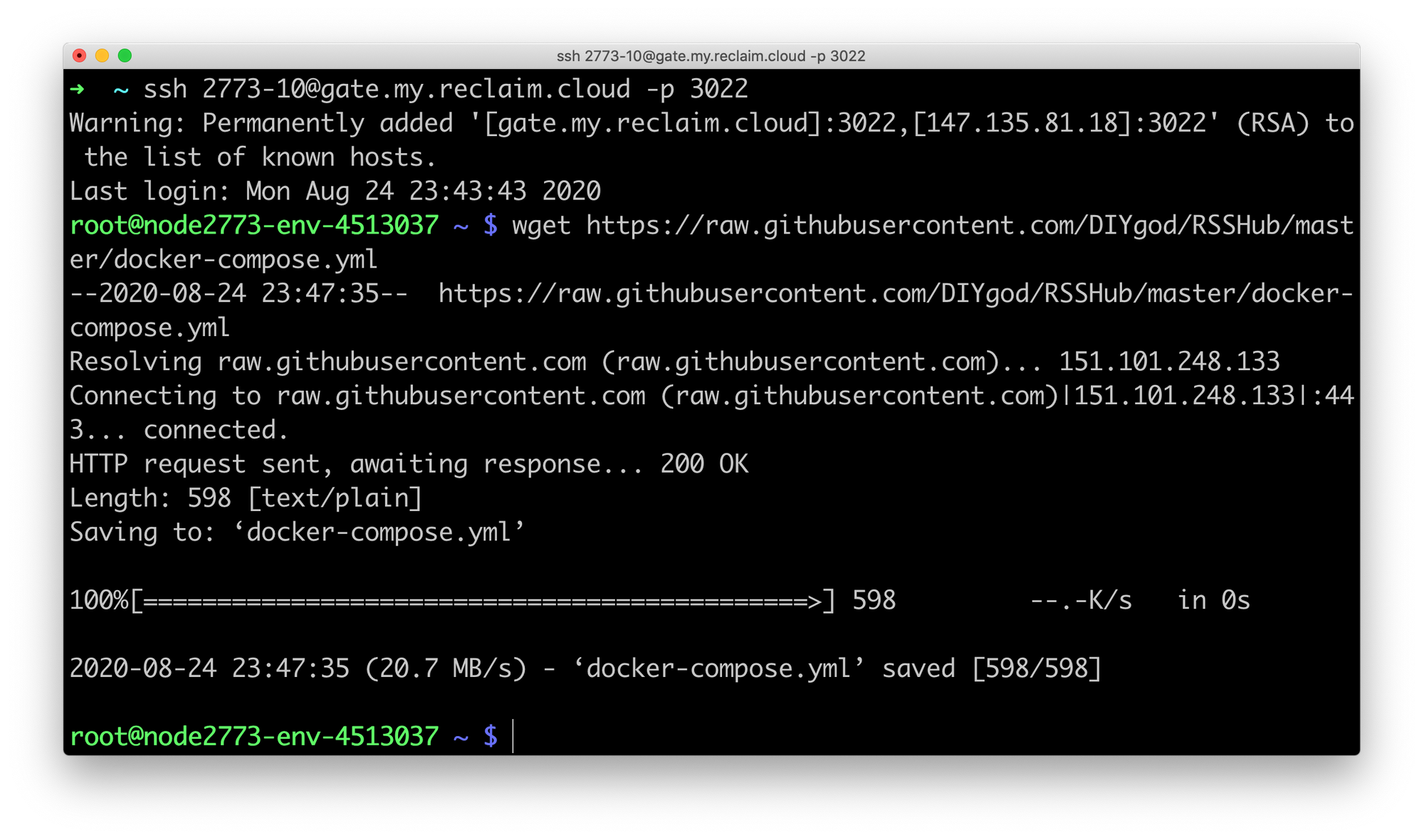

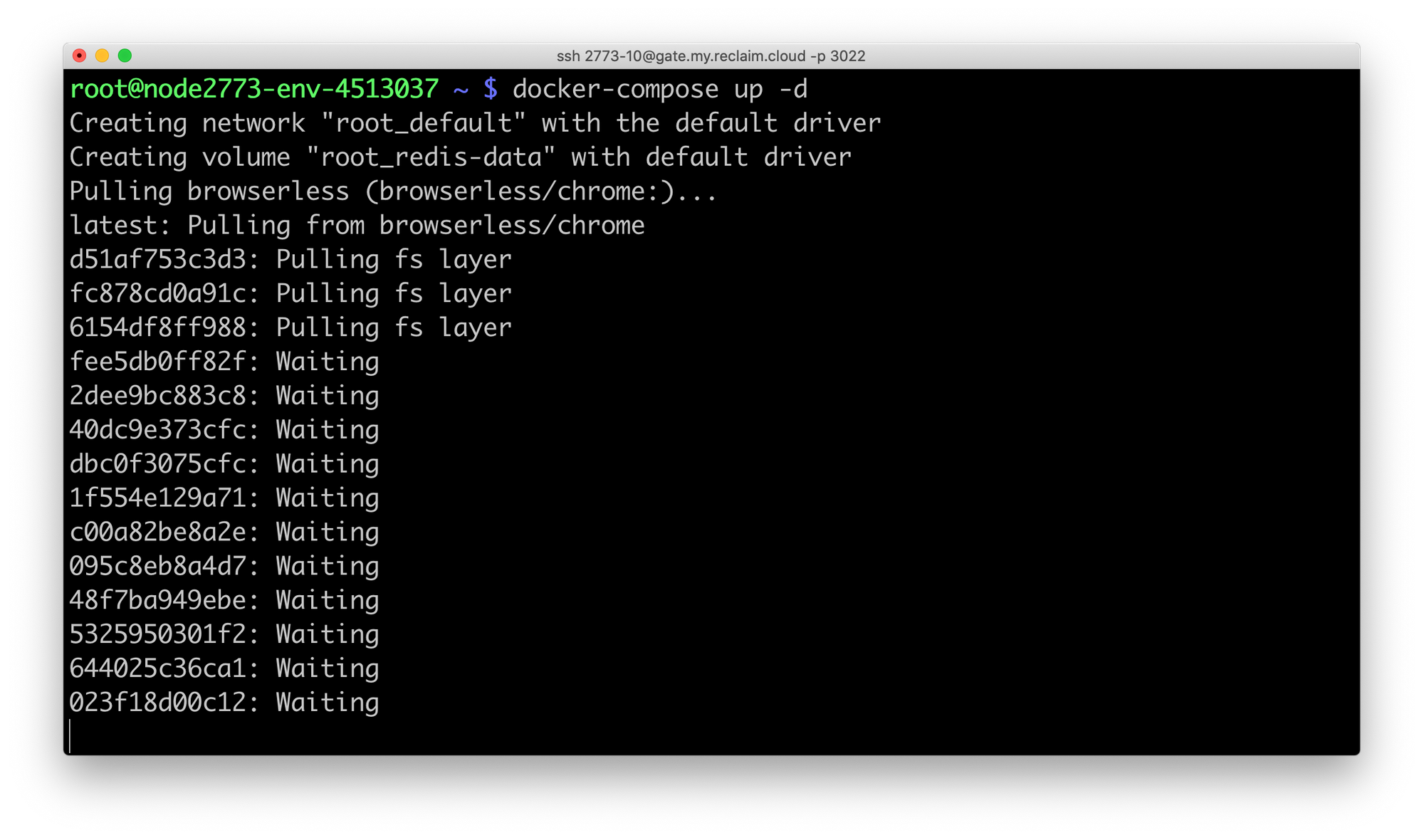

Now I'll use SSH to get things up and running. According to the guide it's just a few commands.

wget https://raw.githubusercontent.com/DIYgod/RSSHub/master/docker-compose.yml

docker volume create redis-data

docker-compose up -d

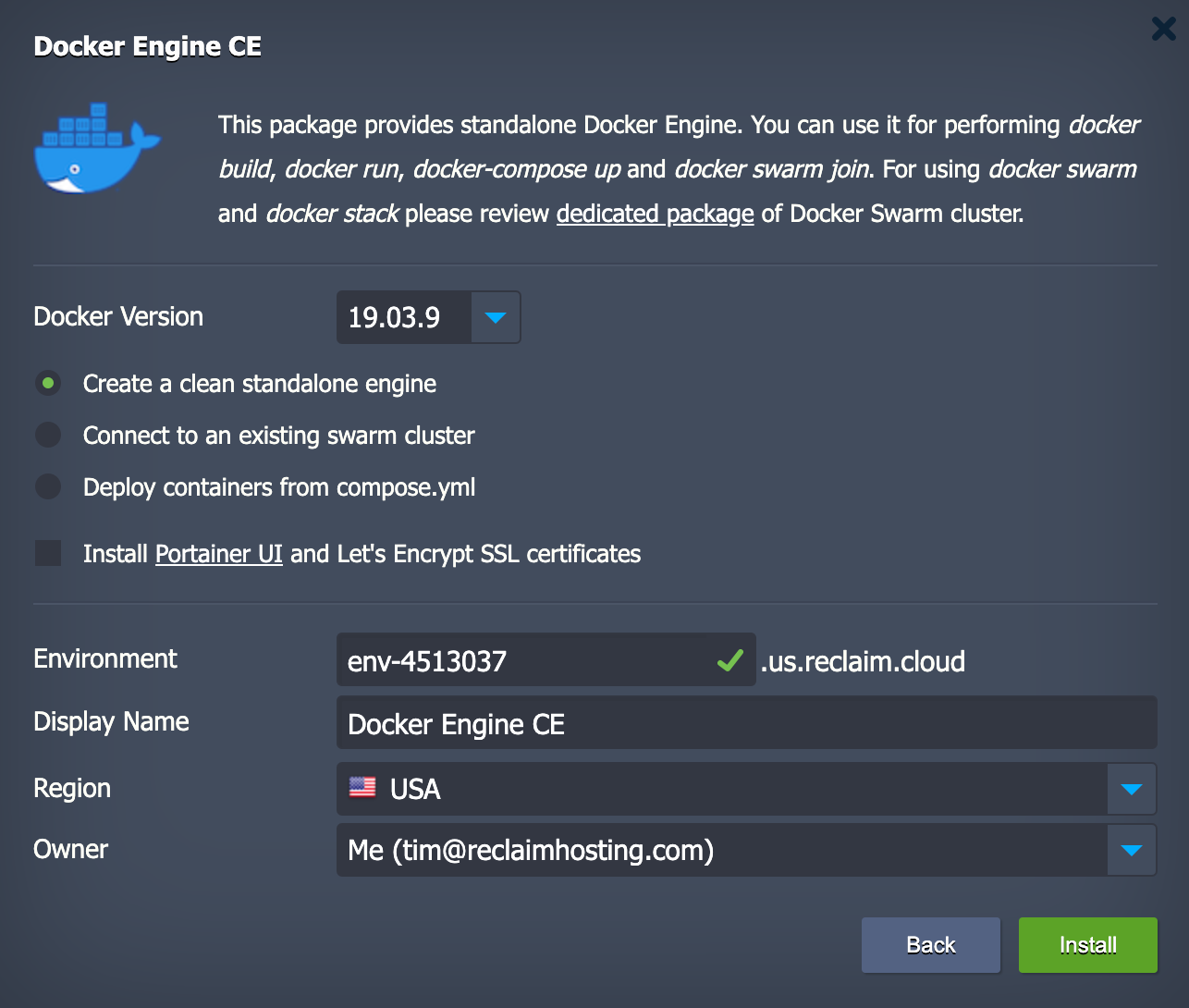

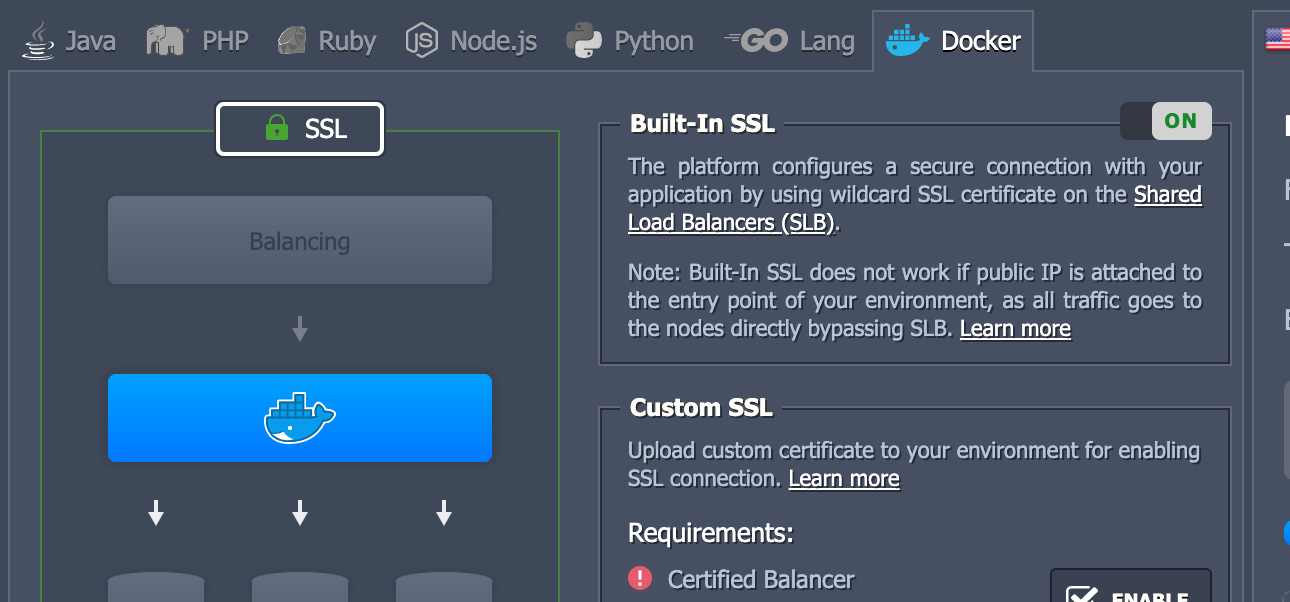

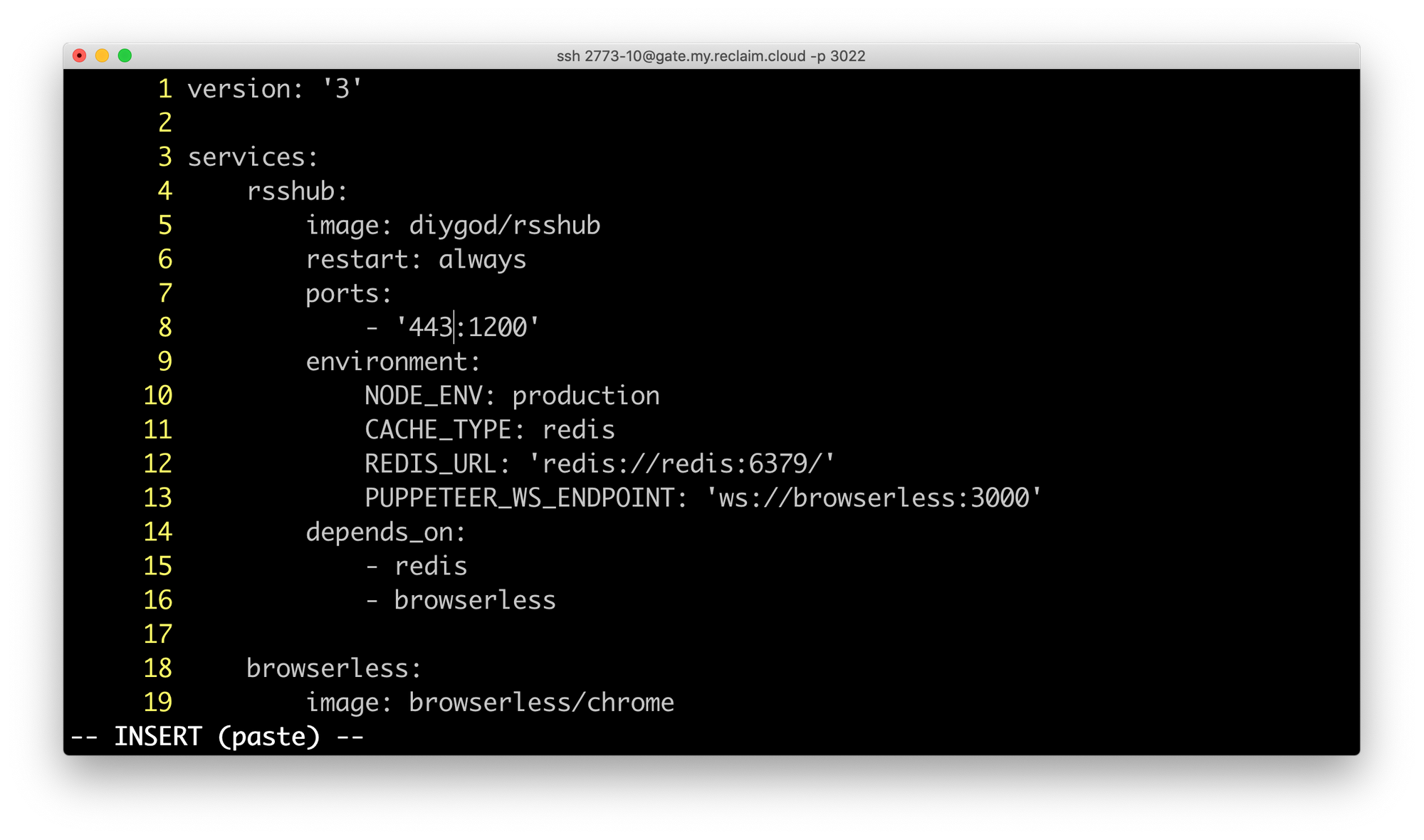

The guide does mention we can also edit our environment in the docker-compose.yml file. Looking at that, the main thing I think I would want is to have it load over port 443 so that I don't have to put port numbers in the browser. Which reminds me, let's turn SSL on.

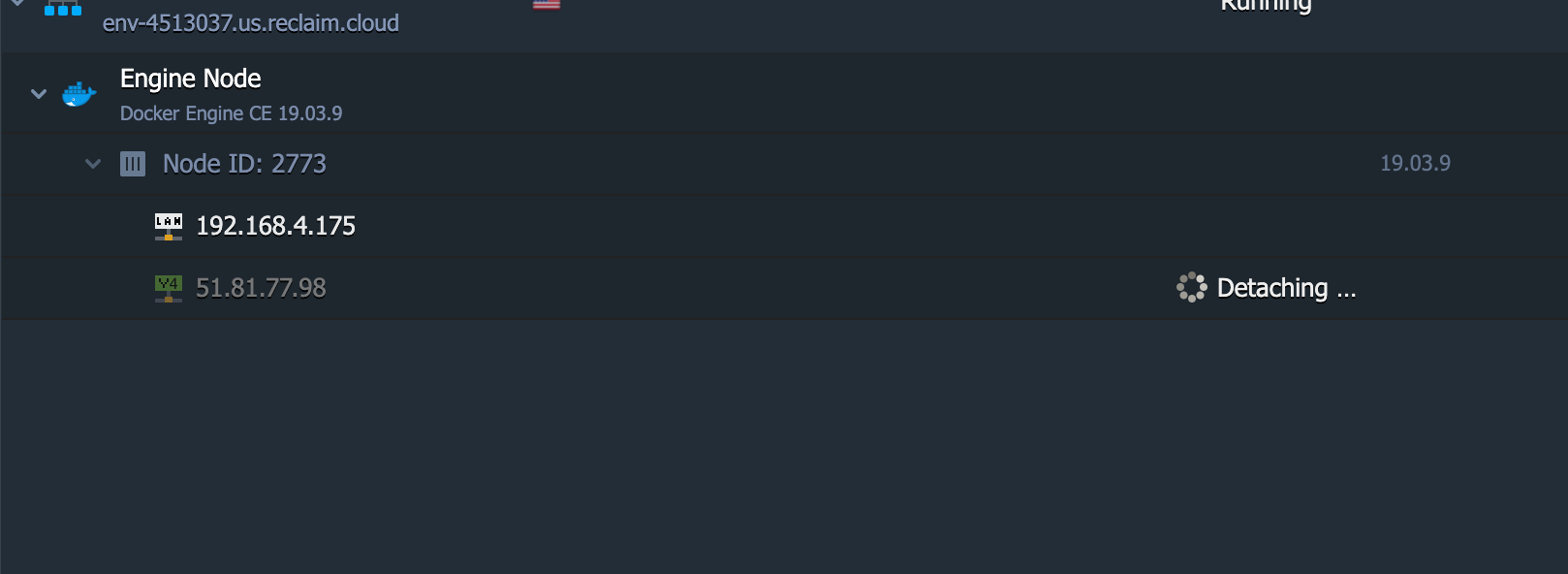

I'm also going to remove this IP address it assigned because I don't think I'll need it and shared SSL through the load balancer works better without a dedicated IP (so it can just proxy traffic to a few different ports, see here).

I'm going to make one edit to the docker-compose.yml file to map port 443 on the outside to port 1200 inside the container.
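If you'd rather script that edit than open an editor, here's a rough sketch. It assumes the downloaded docker-compose.yml publishes the app with a `1200:1200` style entry under `ports:` (check your copy first), and `set_host_port` is just a helper name I made up for this post.

```shell
# Hypothetical helper: rewrite the host side of a "HOST:1200" port mapping.
# Assumes the compose file has a mapping whose container port is 1200.
set_host_port() {
  file="$1"
  host_port="$2"
  # Swap whatever host port precedes ":1200", keeping any surrounding quotes.
  # A backup of the original file is left next to it as "$file.bak".
  sed -i.bak -E "s/([\"']?)[0-9]+:1200([\"']?)/\1${host_port}:1200\2/" "$file"
}

# Usage: publish the container's port 1200 on host port 443.
# set_host_port docker-compose.yml 443
```

The container side stays at 1200 either way; only the port exposed on the host changes.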

Now I create the redis volume for persistent data storage and run the docker-compose up -d command and we're off to the races. Cross your fingers!

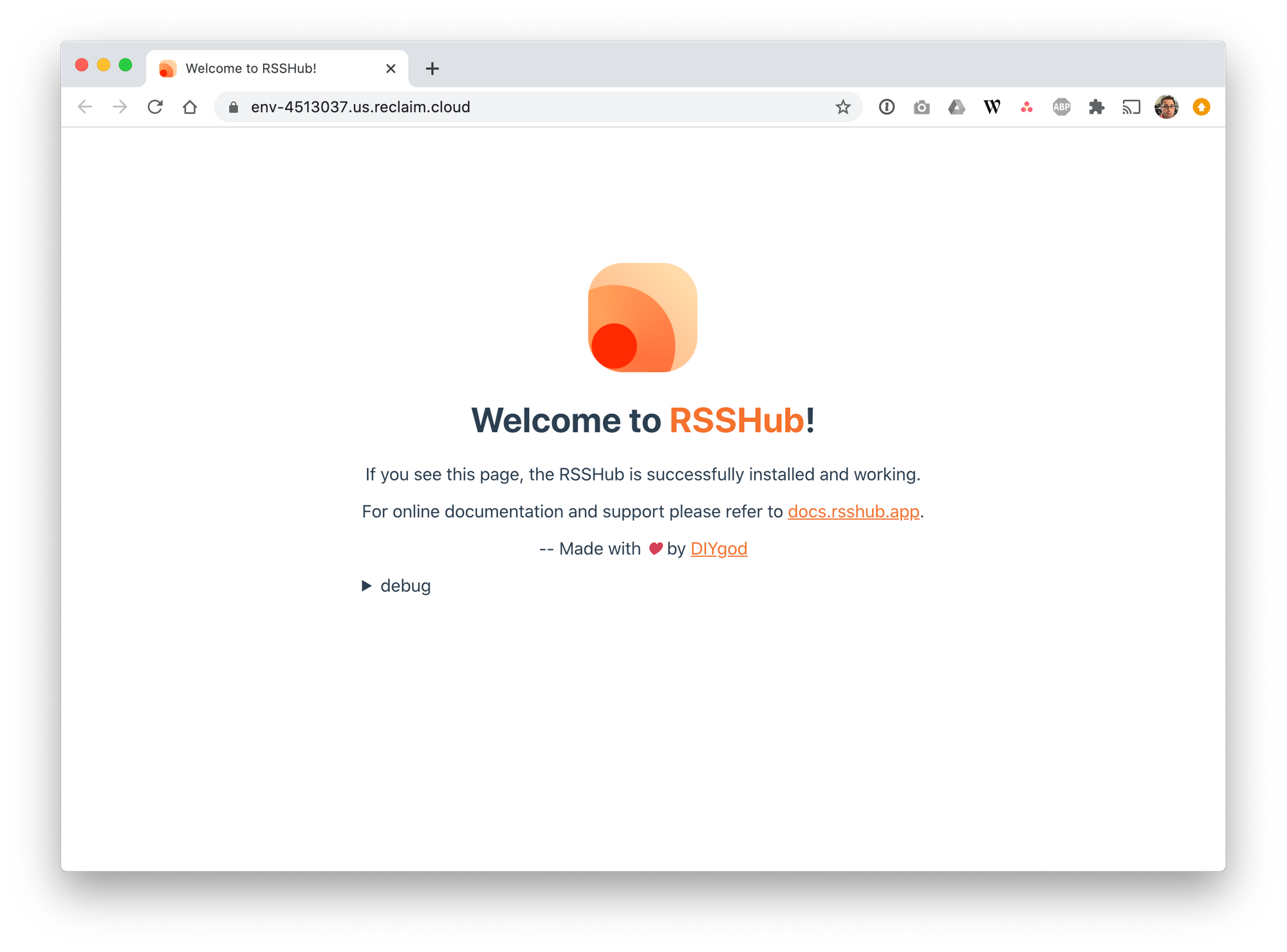

A few minutes later that finishes up with no apparent errors. If this works I should just be able to load my environment URL over https and see something.

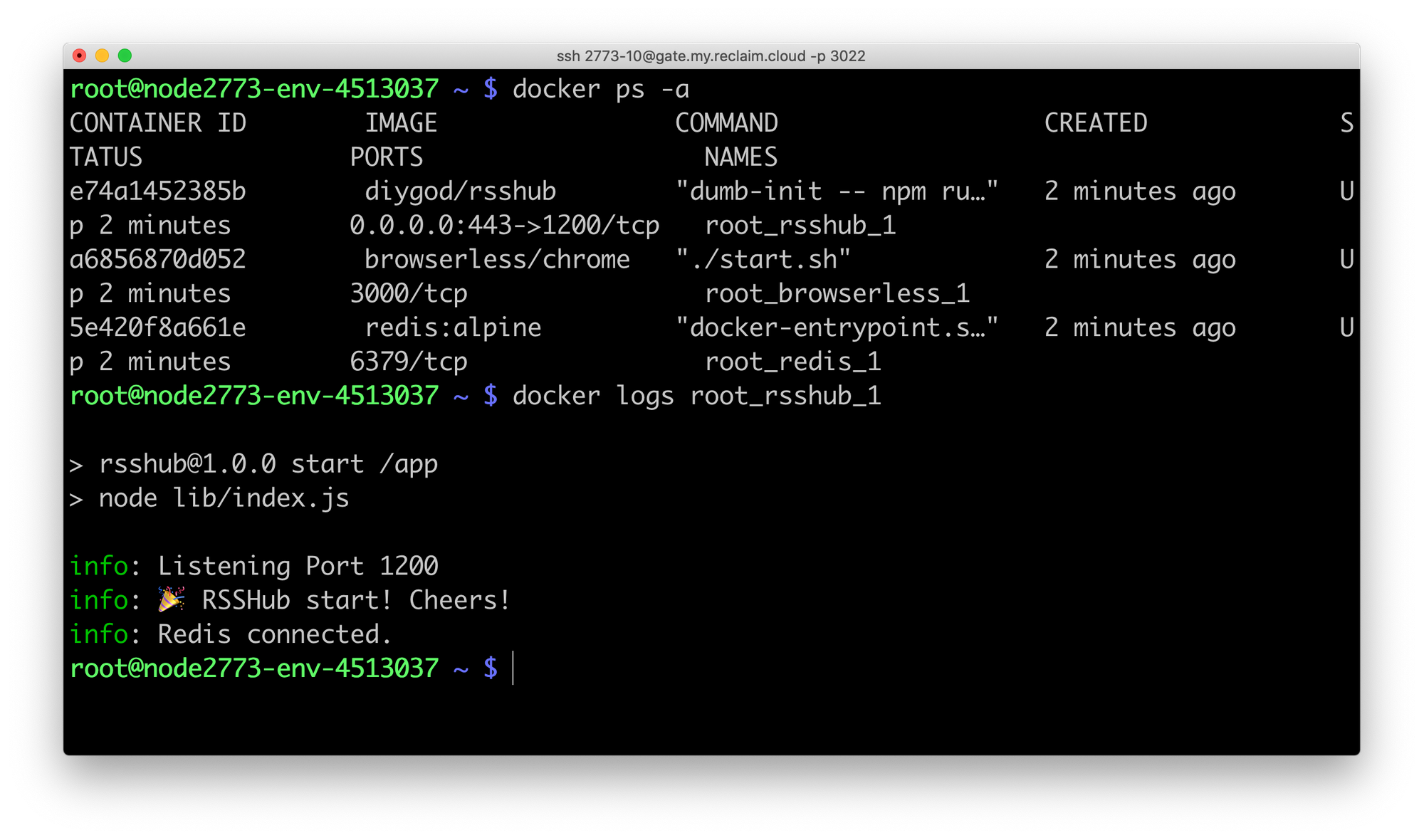

So, it's not showing me anything. But if I run docker ps -a I see the containers running and I can run docker logs root_rsshub_1 and see the app started too.

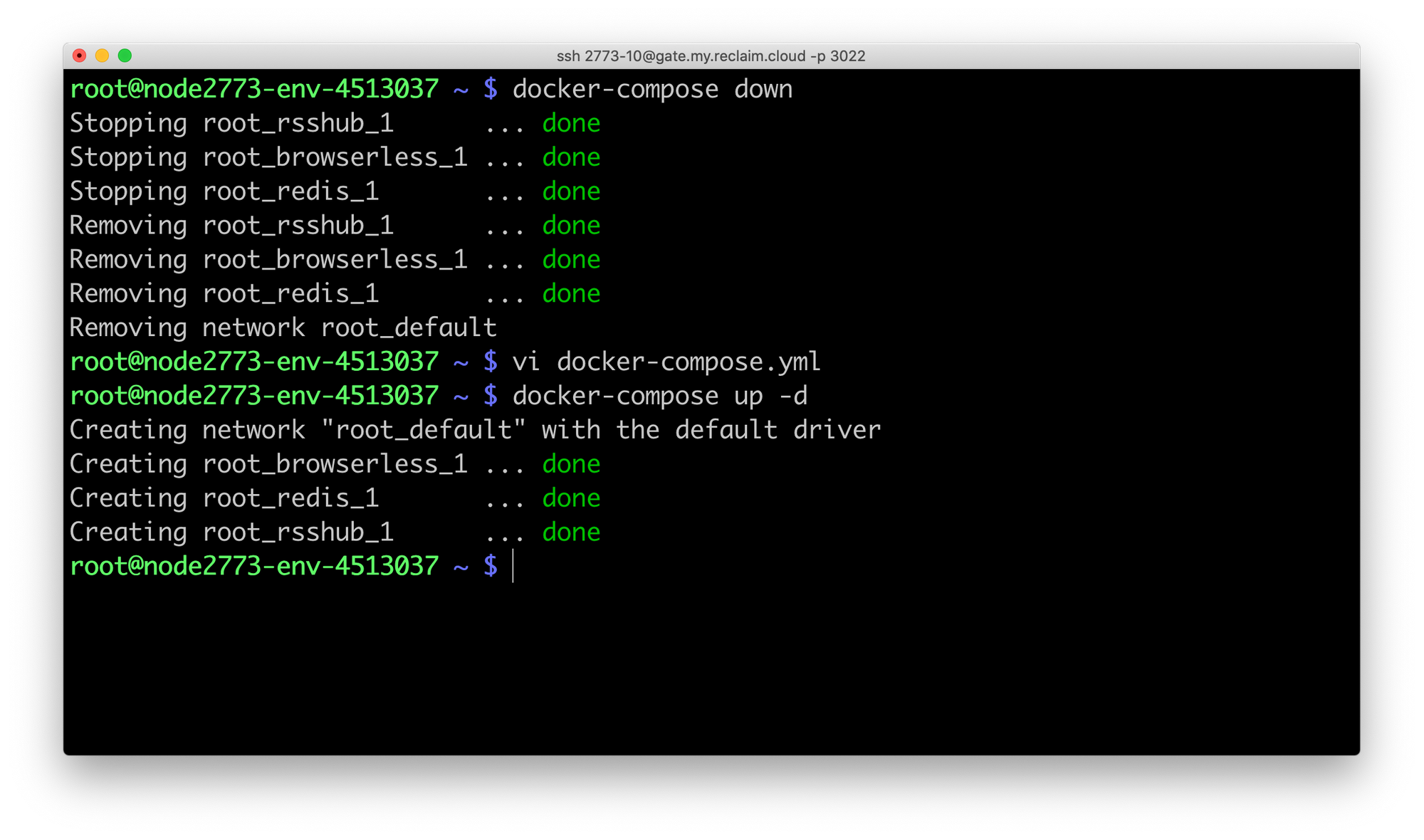

Feels like we're close. I'm wondering at this point if I should have mapped port 80 instead of 443 to avoid any issues with SSL certs. I know the shared load balancer will handle SSL and pass the traffic through to port 80. So let's docker-compose down, edit our file again to change the mapping to 80:1200, and start things back up.

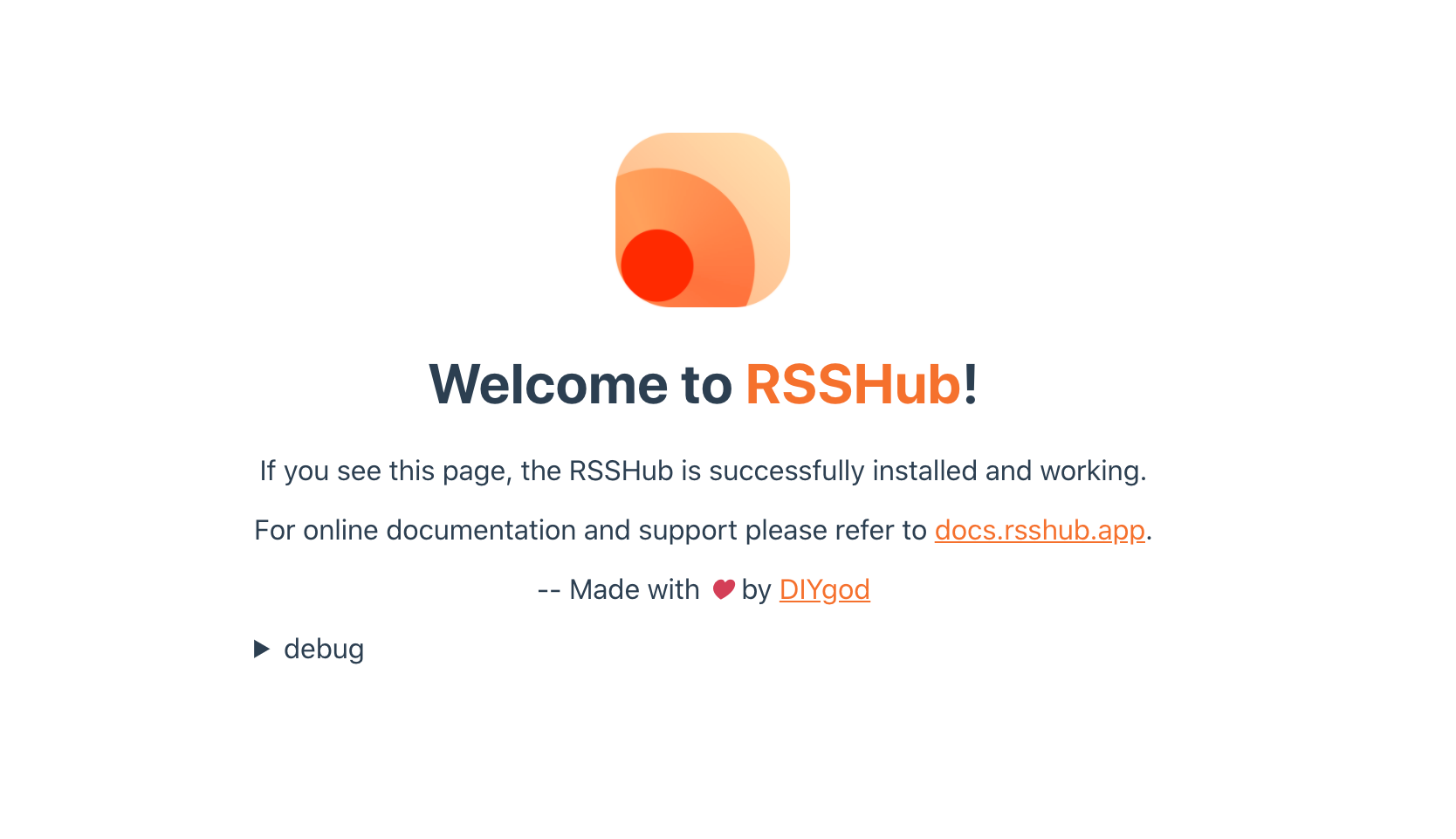

And....

Now we're talking! So it's online... now what? Crap, I have to read the docs some more? Ok, I'll click that link.

Errrr, what? Ah, let's switch to English.

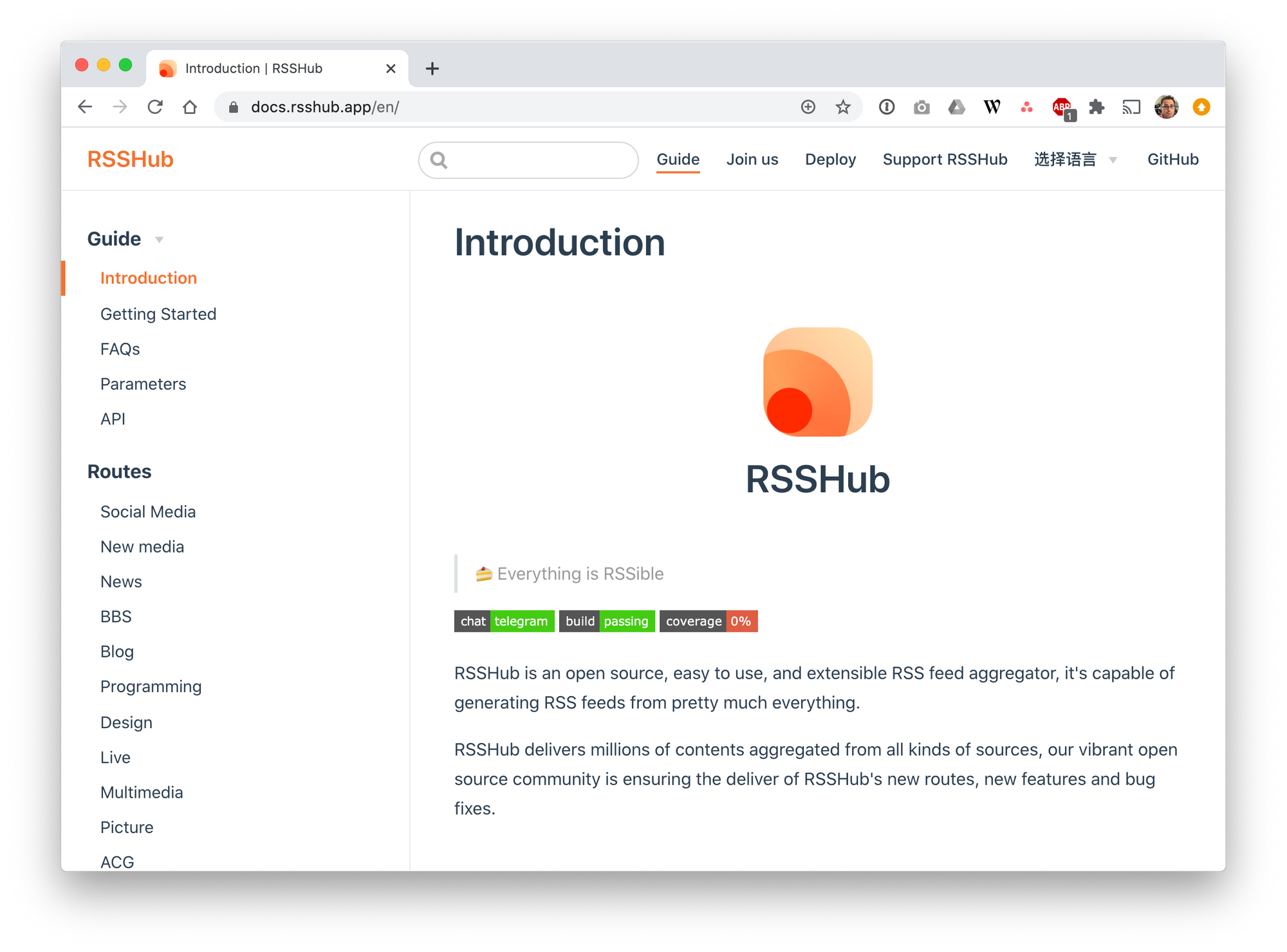

Much better. Ok, so the Getting Started section shows how you can use your self-hosted URL to create a feed from another site, for example a Twitter user's timeline. The Twitter route is set up as /twitter/user/:id, where :id is the actual Twitter username. So let's see if that works for me.
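To spell out how those route patterns turn into a real URL, here's a tiny sketch. The `feed_url` helper, the host, and the username are all placeholders I made up for illustration; swap in your own environment URL.

```shell
# Hypothetical helper: join an RSSHub base URL and a route path into a feed URL.
feed_url() {
  base="${1%/}"    # drop one trailing slash from the base URL, if present
  route="${2#/}"   # drop one leading slash from the route, if present
  printf '%s/%s\n' "$base" "$route"
}

# Placeholder host and username, with :id replaced by the username.
feed_url "https://rsshub.example.com" "/twitter/user/some_username"
```

Whatever that prints is the address you'd paste into a browser or feed reader.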

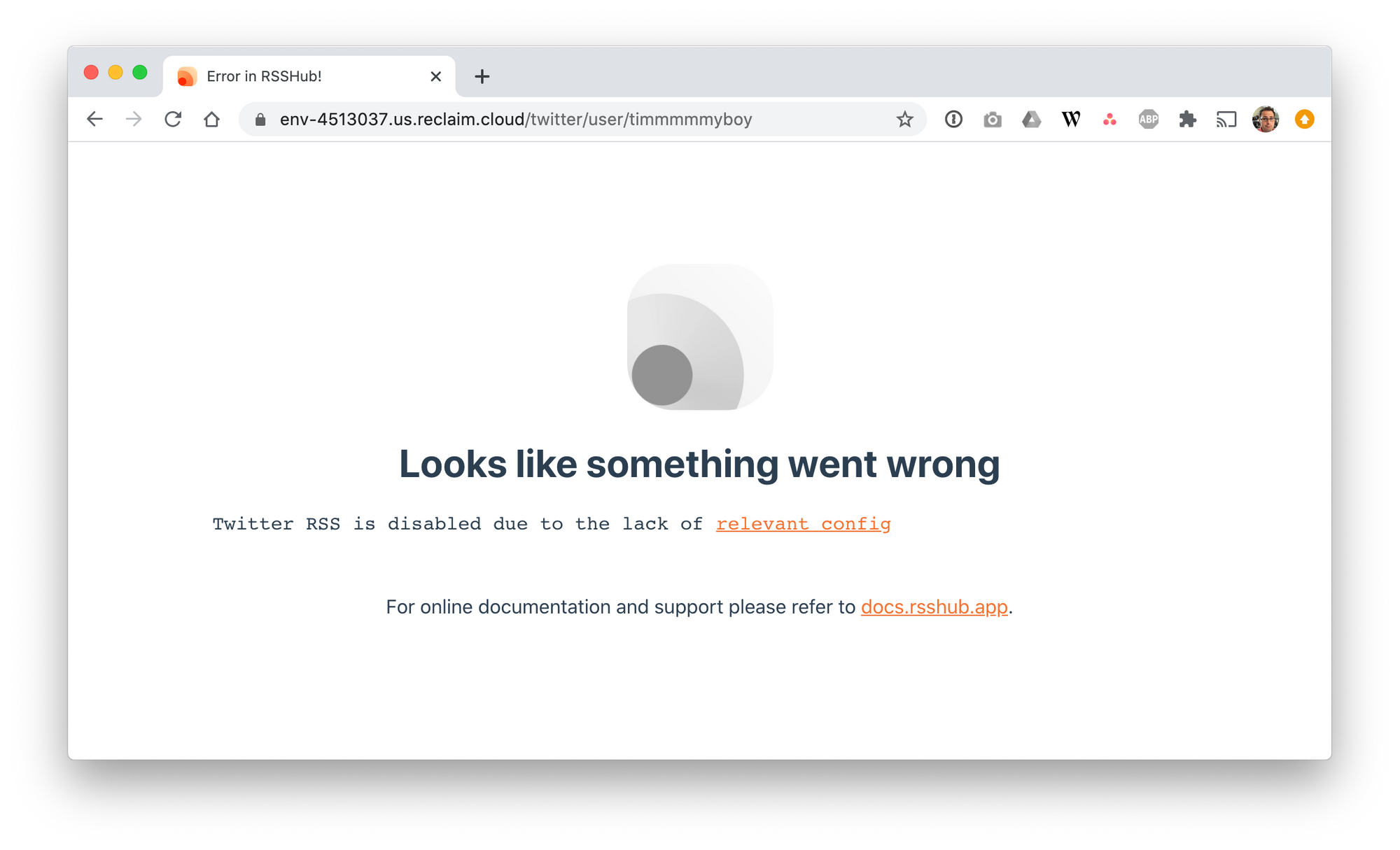

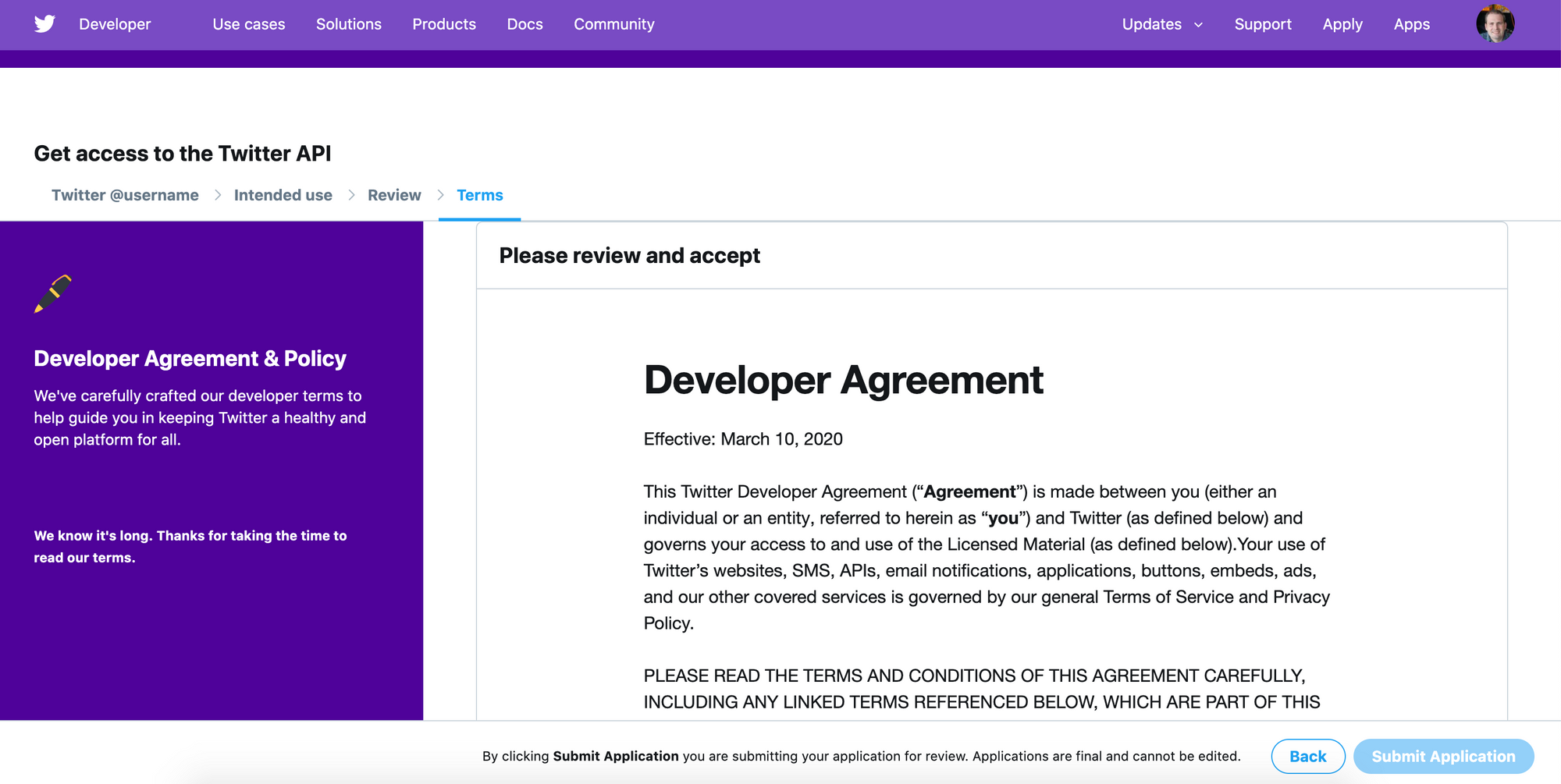

Sigh, I have to configure the route first? The "relevant config" link there just goes to the homepage of the docs. Not very helpful. After a bit more digging, it looks like some of the routes require API access tokens as environment variables: https://docs.rsshub.app/en/install/#configuration-route-specific-configurations. Ok, I can do that I suppose, at least for this post :).

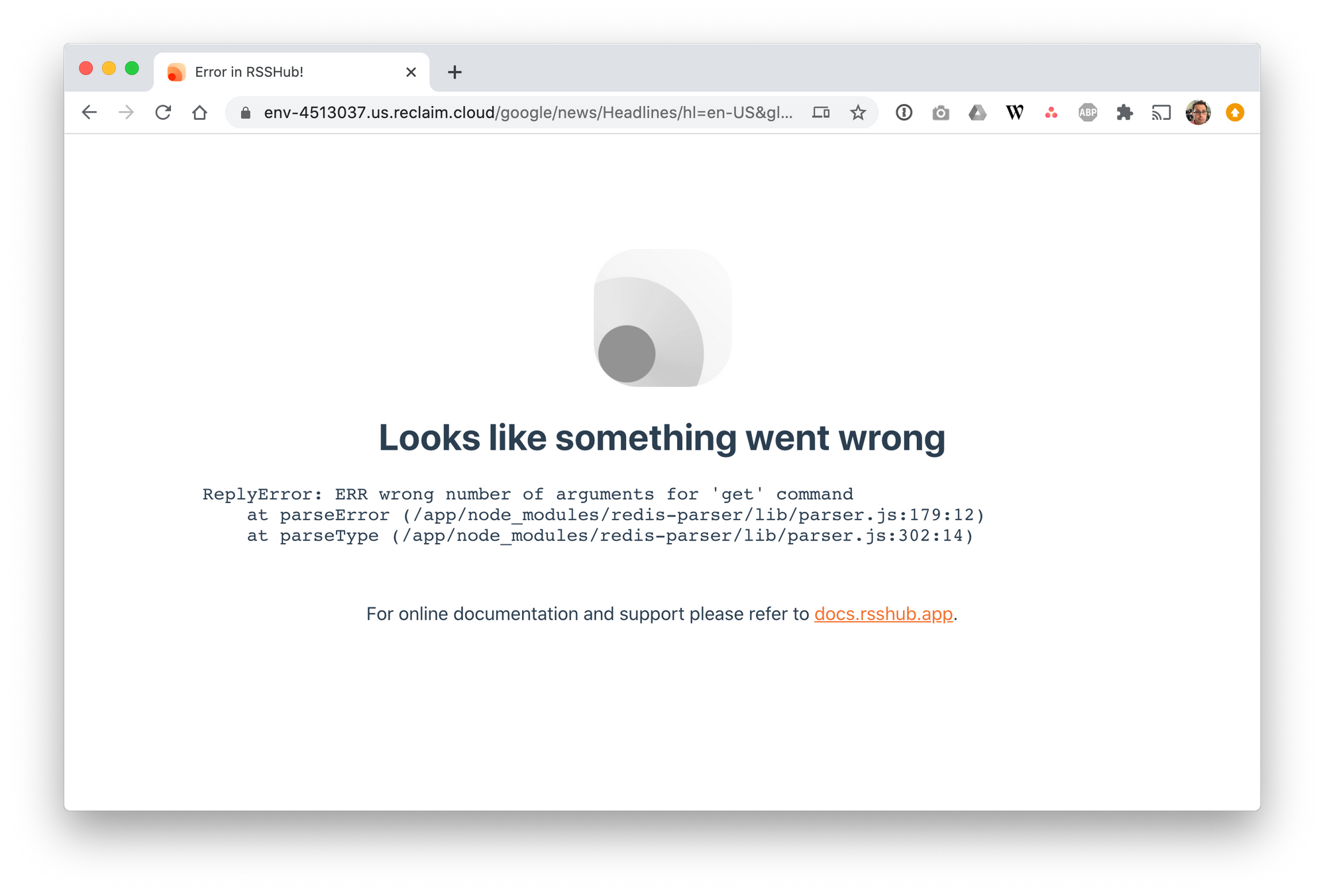

Jesus, seriously? Never mind, accessing Twitter programmatically appears to be as much a dumpster fire as the website itself is. Let's just use Google News as an example; docs for it are at https://docs.rsshub.app/en/new-media.html#google-news-news. So I should be able to load https://env-4513037.us.reclaim.cloud/google/news/Headlines/hl=en-US&gl=US&ceid=US:en and get something.

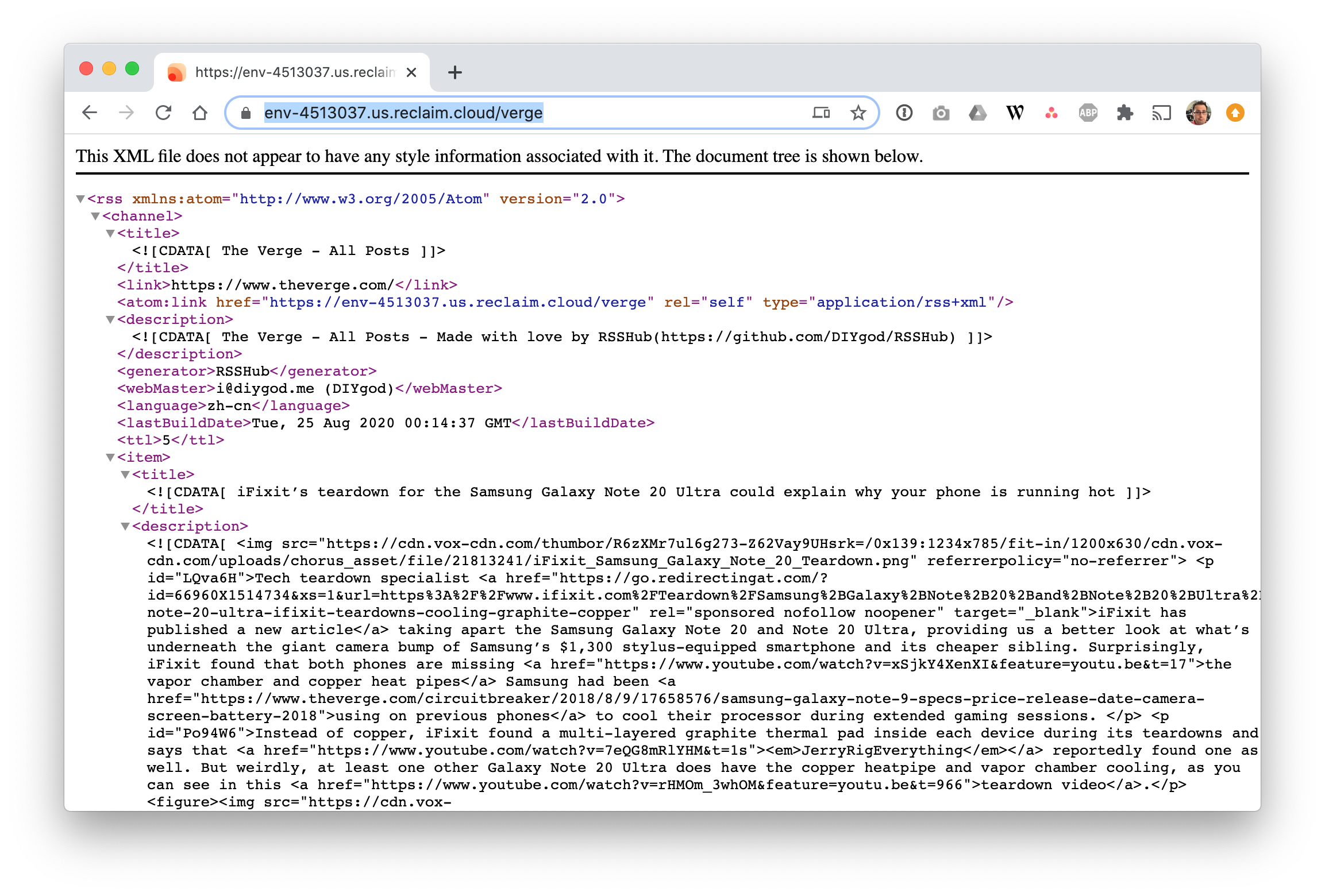

Hmm, more errors. Ok, let's try one more route, Verge. The docs say it can grab full text from the feed as opposed to excerpts only, which is interesting. And no API keys or random parameters, just add /verge to the URL.
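If you want to check a route from the command line instead of the browser, something like this rough sketch should do. `looks_like_feed` is a made-up helper, the host is a placeholder, and I'm assuming the route answers with an XML feed whose root element is `<rss>` or `<feed>`.

```shell
# Hypothetical helper: succeed if the text on stdin contains an <rss> or <feed> element.
looks_like_feed() {
  grep -qE '<(rss|feed)[ >]'
}

# Usage against a self-hosted instance (placeholder host, needs network access):
# curl -fsS "https://rsshub.example.com/verge" | looks_like_feed && echo "got a feed"
```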

Heyo! So it does work after all. In the end, RSSHub appears to be a programmatic screen-scraper-to-RSS-feed generator. Anyone can write a "route" that lets you pull in an RSS feed from a specific URL, even if the site doesn't offer one (or the feed it offers doesn't display what you want). Kind of an interesting project, but I have to admit I was hoping it was more like a syndication bus or reader or something along those lines. In any case, we got there in the end and got it working, so I guess there's something to that.
