Streaming and Recording in the Classroom

Whenever I hear the words "lecture capture" I immediately think of stale videos of someone talking in front of a chalkboard, picture-in-picture with a PowerPoint, about some mundane topic. The term is loaded with connotations that undersell a technology that could be used in powerful ways on our campus. So let's not call it "lecture capture," at least for a moment, and talk about some of my initial goals for offering faculty the ability to broadcast the work happening in their classes to a wider audience. The Incubator Classroom has a lot of hardware that makes this possible, so I've started to build out a scenario for how I think this could work in a way that's flexible and useful for UMW.

Capture

The first step is to get a feed of the various things happening in the class that can be sent somewhere to stream or record. In a typical setup with systems like this you have two feeds: a Video feed, which is a camera pointed at the professor, and a Content feed, which is the PowerPoint. Again, boring. We have so many other options for "video" and "content" in the Incubator Classroom alone that we want the flexibility to offer them all. Right now that means two PTZ cameras, one on the rear wall and one up front, that can be positioned and zoomed through the touchpanel interface. Either camera can be the Video feed, and you can switch between them. Ideally we'd also have a third option: using the output of a professional switcher as the Video feed. I can see a scenario where we bring in the mobile rack with the ATEM Television Studio that Andy has set up and plug it into the classroom rack to do on-demand professional switching with a variety of cameras.

For "Content" we can grab anything being projected on either projector as a feed, switching between the two on the touchpanel the same way we choose the video feeds. So projector 1 might have a PowerPoint or a Google Hangout with a remote co-teacher (like in Jim's class), and projector 2 could be a wireless display of what a student is showing off or a video the class is watching; anything on the display is automatically in the Content feed. For the advanced professional switching scenario mentioned above, we can take HDMI out for all of the different output types directly from the classroom rack into the switcher.
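To make the routing choices concrete, here is a minimal Python sketch of what the touchpanel exposes: one Video source (either PTZ camera, or an external switcher once the mobile rack is plugged in) and one Content source (either projector). The class and enum names are hypothetical; the real logic lives in the Crestron control system, not in code we'd write ourselves.

```python
from dataclasses import dataclass
from enum import Enum


class VideoSource(Enum):
    # The two PTZ cameras plus an optional external switcher (e.g. the ATEM rack).
    REAR_PTZ = "rear_ptz"
    FRONT_PTZ = "front_ptz"
    SWITCHER = "switcher"


class ContentSource(Enum):
    # Whatever is on the selected projector becomes the Content feed.
    PROJECTOR_1 = "projector_1"
    PROJECTOR_2 = "projector_2"


@dataclass
class CaptureRouting:
    """Hypothetical model of the touchpanel's capture routing state."""
    video: VideoSource = VideoSource.FRONT_PTZ
    content: ContentSource = ContentSource.PROJECTOR_1
    switcher_connected: bool = False

    def select_video(self, source: VideoSource) -> None:
        # The switcher option only makes sense once the mobile rack is plugged in.
        if source is VideoSource.SWITCHER and not self.switcher_connected:
            raise ValueError("external switcher is not connected")
        self.video = source

    def select_content(self, source: ContentSource) -> None:
        self.content = source

    def feeds(self) -> dict:
        # The pair of feeds handed on to the recorder or streamer.
        return {"video": self.video.value, "content": self.content.value}
```

The point of modeling it this way is that the recorder downstream only ever sees two feeds, no matter how many cameras or displays sit behind each selection.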

Record

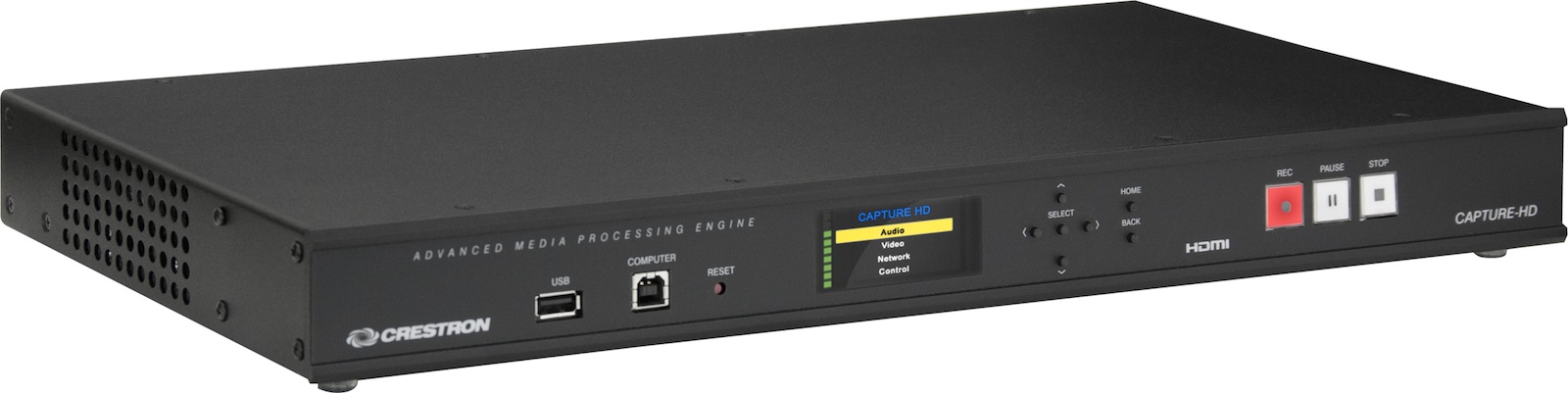

Now that we have our feeds, we need to start recording them in some sort of combined format. In our classroom they have put in a Crestron Capture-HD Pro unit for this purpose, so that's where I've started experimenting. The rack unit takes in our Video feed via SDI and the Content feed via HDMI (though it supports other formats). Audio is handled for both speech (the various microphones in the room) and content. The device also supports several basic layouts for the two sources (video and content), like full-screen, picture-in-picture, and side-by-side. A USB flash drive can be plugged into the front or back of the device, but a better option we'll probably take advantage of is keeping a decent-sized SDHC card in the back of the unit for storage.
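The three layouts boil down to deciding where each source lands in the output frame. This sketch computes that placement for a 1920x1080 output; the picture-in-picture inset size (a quarter of the frame), its 32-pixel margin, and the letterboxed side-by-side arrangement are assumptions for illustration, not the Capture-HD's actual geometry.

```python
from typing import Dict, Tuple

# (x, y, width, height) in output pixels
Rect = Tuple[int, int, int, int]


def layout(name: str, primary: str = "content", out_w: int = 1920,
           out_h: int = 1080, pip_scale: float = 0.25,
           margin: int = 32) -> Dict[str, Rect]:
    """Return where each source lands in the output frame for a layout.

    `primary` is the source that fills the frame in full-screen and
    picture-in-picture; the other source is the inset.
    """
    if primary not in ("video", "content"):
        raise ValueError(f"unknown source: {primary}")
    secondary = "video" if primary == "content" else "content"
    if name == "fullscreen":
        # One source fills the whole frame; the other is not shown.
        return {primary: (0, 0, out_w, out_h)}
    if name == "pip":
        # Primary fills the frame; secondary is an inset in the bottom-right.
        w, h = int(out_w * pip_scale), int(out_h * pip_scale)
        return {
            primary: (0, 0, out_w, out_h),
            secondary: (out_w - w - margin, out_h - h - margin, w, h),
        }
    if name == "side_by_side":
        # Each source gets half the width at 16:9, centred vertically
        # (letterboxed above and below).
        w = out_w // 2
        h = w * 9 // 16
        y = (out_h - h) // 2
        return {"video": (0, y, w, h), "content": (w, y, w, h)}
    raise ValueError(f"unknown layout: {name}")
```

For example, `layout("pip")` puts the camera in a 480x270 box at (1408, 778) over a full-frame PowerPoint, and `layout("side_by_side")` gives each source a 960x540 half of the frame.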

Upload

This is where things start to get interesting, and my sense of the full workflow isn't fleshed out yet (but what better reason to blog it now?). Now that we have our recording, the next step is to get it online. The Crestron Capture-HD supports FTP uploads to a server: it stores the recording locally on the SD card and pushes it up after the recording is complete, so a network hiccup during class can't cost us the file. We could set up a temporary holding space on the Domain of One's Own web server for the files, which I would then push up to an Amazon S3 bucket using s3cmd. We'd need to make sure the file had finished uploading before triggering the push to S3. At that point we have a video in MP4 format stored in the cloud with a public URL. We could trigger an email to an admin with the S3 URL, but I think we can do better.
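One way to handle "make sure the file has finished uploading" is a common heuristic: poll the file in the FTP holding folder and treat it as complete once its size has stopped changing for a few checks, then hand it to s3cmd. This is a sketch under that assumption; the bucket name, the `raw/` prefix, and the polling interval are placeholders, and a hook on the FTP server's own upload-complete event would be more precise if our server offers one.

```python
import os
import subprocess
import time
from typing import List


def wait_until_stable(path: str, interval: float = 30.0, checks: int = 3,
                      timeout: float = 3600.0) -> bool:
    """Treat an FTP upload as finished once its size stops changing.

    Returns True when the size has been identical (and non-zero) for
    `checks` consecutive polls, or False if `timeout` seconds pass first.
    """
    deadline = time.monotonic() + timeout
    last_size, stable = -1, 0
    while time.monotonic() < deadline:
        size = os.path.getsize(path)
        if size > 0 and size == last_size:
            stable += 1
            if stable >= checks:
                return True
        else:
            stable = 0  # still growing (or empty): reset the streak
        last_size = size
        time.sleep(interval)
    return False


def s3cmd_put_command(path: str, bucket: str, prefix: str = "raw/") -> List[str]:
    # Builds `s3cmd put <file> s3://<bucket>/<prefix><name>`; bucket and prefix are placeholders.
    key = prefix + os.path.basename(path)
    return ["s3cmd", "put", path, f"s3://{bucket}/{key}"]


def push_when_ready(path: str, bucket: str) -> None:
    # Only push to S3 after the upload has settled; fail loudly otherwise.
    if not wait_until_stable(path):
        raise TimeoutError(f"{path} never stopped growing")
    subprocess.run(s3cmd_put_command(path, bucket), check=True)
```

A small watcher script or cron job could call `push_when_ready` for each new file that shows up in the holding folder.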

Convert

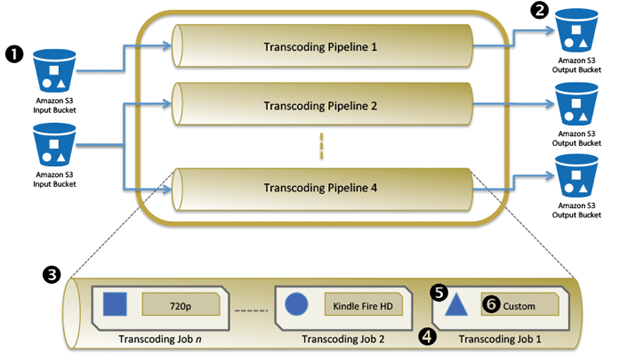

Part of the issue with just handing the URL to a professor is that, while most browsers can play MP4 files at this point, the file is likely to be extremely large for a one-hour class, and the bandwidth needed to download it is going to be a burden (as well as an added expense in data transfer from S3). Amazon has a video conversion service called Elastic Transcoder that takes a video from an S3 bucket, converts it to a variety of formats, and sticks the results in a different S3 bucket. Andy could guide us on a good set of sizes and formats, and we could set up that pipeline so that whenever a video is sent to S3, a job is added to convert it and drop the conversions in the new bucket. I wonder whether an alternative here would be a service like Encoding.com, which could use the FTP location as a watch folder to automatically grab files and begin encoding jobs (cutting out the extra step of moving them from FTP to S3).
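To put rough numbers on "extremely large," file size is just bitrate times duration. The sketch below pairs that arithmetic with a hypothetical output ladder of the kind Andy might recommend; the heights and bitrates are placeholders, not Elastic Transcoder presets. The `ladder_for_source` helper skips renditions taller than the recording, since upscaling only adds bytes.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Rendition:
    name: str
    height: int          # output frame height in pixels
    video_kbps: int      # target video bitrate
    audio_kbps: int = 128

    @property
    def total_kbps(self) -> int:
        return self.video_kbps + self.audio_kbps


# Hypothetical ladder; real sizes and bitrates would come from Andy.
LADDER: List[Rendition] = [
    Rendition("1080p", 1080, 5000),
    Rendition("720p", 720, 2500),
    Rendition("480p", 480, 1000),
    Rendition("360p", 360, 600),
]


def ladder_for_source(source_height: int,
                      ladder: List[Rendition] = LADDER) -> List[Rendition]:
    # Skip renditions taller than the recording: upscaling only adds bytes.
    return [r for r in ladder if r.height <= source_height]


def size_mb(kbps: float, seconds: float) -> float:
    # kilobits per second * seconds -> kilobits; / 8 -> kilobytes; / 1000 -> MB
    return kbps * seconds / 8 / 1000
```

For example, if the recorder wrote at 8 Mbps, `size_mb(8000, 3600)` gives 3,600 MB, roughly 3.6 GB for a one-hour class, while the hypothetical 720p rendition (2,628 kbps with audio) would come to about 1.2 GB.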

Embed

The last step is to get the video files into a player that can be embedded. Since we've got multiple formats, we'd want the system to detect the available bandwidth and scale up or down based on that. I've started playing with Wowza Media Server to accomplish this, and I think it's the perfect solution. Many of you who have read my stuff in the past know that I've used Wowza extensively for livestreaming content (and that will come up again for livestreaming these courses later), but I never made much use of Wowza's video-on-demand features. The biggest reason was that Wowza was a nightmare to manage: it had no GUI, and all settings lived in XML files on the server. They've made a ton of improvements in the new version, and one of the best is a web-based GUI for setting up players for video-on-demand content stored locally or externally (and it looks like we can upgrade for free!). I was stoked to see that Amazon S3 is indeed supported as a storage source for content streamed into the player. Wowza can also take the various video files of different quality and switch between them based on available bandwidth, so it's a nice option. Once the VOD player is configured to look at the final S3 bucket, the actual embed code would follow a standard format, so we can hook into Elastic Transcoder's completion of a conversion job to shoot someone an email with the embed code for the video.
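Because the embed code follows a standard format, generating it and the notification email is mostly string templating. Elastic Transcoder pipelines can publish job-status notifications to an Amazon SNS topic, which is one place such a hook could run. In this sketch the player page URL, the `state` and `output_key` field names of the parsed job, and the sender address are all hypothetical placeholders; only the standard-library `email`, `html`, and `urllib` calls are real APIs.

```python
from email.message import EmailMessage
from html import escape
from urllib.parse import quote

# Hypothetical: a player page configured to pull renditions from Wowza.
PLAYER_PAGE = "https://media.example.edu/player.html"
SENDER = "capture@example.edu"  # hypothetical sender address


def embed_code(output_key: str, width: int = 640, height: int = 360) -> str:
    # Every video gets the same snippet; only the (URL-encoded) key changes.
    src = f"{PLAYER_PAGE}?video={quote(output_key, safe='')}"
    return (f'<iframe src="{escape(src, quote=True)}" width="{width}" '
            f'height="{height}" allowfullscreen></iframe>')


def completion_email(job: dict, to_addr: str) -> EmailMessage:
    """Build the "your recording is ready" email for a finished transcode job.

    `job` is assumed to be a parsed completion notification with `state`
    and `output_key` fields; those field names are placeholders.
    """
    if job.get("state") != "COMPLETED":
        raise ValueError("transcode job has not completed")
    key = job["output_key"]
    msg = EmailMessage()
    msg["Subject"] = f"Class recording ready: {key}"
    msg["From"] = SENDER
    msg["To"] = to_addr
    msg.set_content("Paste this embed code into your course site:\n\n"
                    + embed_code(key) + "\n")
    return msg
```

The resulting message could then be sent with `smtplib.SMTP(host).send_message(msg)` against whatever mail relay campus provides.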

I don't have any sense yet of how long this whole workflow would take, but I'd imagine a professor who finished a class recording in the morning would have an email with the embed code by that afternoon or evening (the file transfers via FTP and then S3, and the conversion job, are the two big potential time sinks). The Crestron Capture-HD can also create an XML file with metadata about a class if the recording is scheduled through Crestron's Fusion RV interface, which our IT department apparently already uses to manage classroom technology. So I'll be working to get access to that and see what the potential is there.
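To get a feel for whether "by that afternoon" is realistic, the two time sinks can be estimated separately: transfers scale with file size over effective link speed, and conversion scales with class length over the transcoder's speed relative to real time. Every input here (link speeds, the 70% efficiency factor, transcode speed) is an assumption to be replaced with measurements from the room.

```python
def transfer_minutes(size_gb: float, link_mbps: float,
                     efficiency: float = 0.7) -> float:
    """Minutes to move a file over a link, in decimal units (1 GB = 8000 Mb).

    `efficiency` is an assumed fraction of the nominal link rate actually achieved.
    """
    megabits = size_gb * 8 * 1000
    return megabits / (link_mbps * efficiency) / 60


def turnaround_minutes(size_gb: float, class_minutes: float, ftp_mbps: float,
                       s3_mbps: float, transcode_x_realtime: float,
                       efficiency: float = 0.7) -> dict:
    # Sum the potential time sinks: FTP upload, push to S3, and transcoding.
    ftp = transfer_minutes(size_gb, ftp_mbps, efficiency)
    s3 = transfer_minutes(size_gb, s3_mbps, efficiency)
    transcode = class_minutes / transcode_x_realtime
    return {"ftp": ftp, "s3": s3, "transcode": transcode,
            "total": ftp + s3 + transcode}
```

With hypothetical numbers, a 3.6 GB file over a 100 Mbps link at full efficiency takes about 4.8 minutes per hop (closer to 7 minutes at the default 70%), so a transcode running at 2x real time (30 minutes for an hour-long class) would be the larger share.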

Stream

The other use case we'd be looking at is the ability to livestream a class or event from a space, potentially at the same time it is being recorded. One nice aspect of the Crestron Capture-HD is that it can send an RTSP stream of what it's capturing to a media server, and Wowza can take that stream and turn it into a livestream that can be embedded anywhere. The embed would again follow a standard format, so we'd have an embed code for each stream-enabled classroom that faculty could put in their course site, and it would be live whenever someone was streaming from the room. Remember how we encoded multiple versions of each on-demand recording? Wowza can transcode livestreams on the fly, so users with low bandwidth aren't stuck trying to pull a high-def stream. The only issue with the streaming scenario is that the Capture-HD box can't record and stream at the same time, so the contractors put two of these boxes in the rack for the Incubator Classroom. I'm not sure that's the way to go for other classrooms. It might be, with the upside that, as in our case, a "Start/Stop Stream" button can be integrated right into the touch panel, but there may be more cost-effective solutions.
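Adaptive playback comes down to one decision made repeatedly on the viewer's side: pick the best rendition that fits the bandwidth currently available. In practice the player (for example an HLS client) makes this choice against the renditions Wowza publishes, so this is only a simplified illustration of the idea, not Wowza's or any player's actual algorithm. The rendition names, bitrates, and the 80% headroom factor are hypothetical.

```python
from typing import List, Tuple

# Hypothetical renditions a live transcoder might publish: (name, total kbps).
RENDITIONS: List[Tuple[str, int]] = [
    ("source", 4000),
    ("720p", 2000),
    ("360p", 800),
    ("240p", 350),
]


def pick_rendition(measured_kbps: float,
                   renditions: List[Tuple[str, int]] = RENDITIONS,
                   headroom: float = 0.8) -> str:
    """Choose the highest-bitrate rendition that fits in part of the bandwidth.

    Only `headroom` of the measured bandwidth is budgeted so small dips don't
    cause stalls; if nothing fits, fall back to the lowest-bitrate rendition.
    """
    budget = measured_kbps * headroom
    fitting = [r for r in renditions if r[1] <= budget]
    if fitting:
        return max(fitting, key=lambda r: r[1])[0]
    return min(renditions, key=lambda r: r[1])[0]
```

A viewer measuring 3,000 kbps would get the 720p rendition, while one at 300 kbps falls back to the lowest rendition rather than stalling on the source stream.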

So that's the workflow I have mapped out in my head right now, and I've been piecing bits of it together in the classroom to see where it might fall apart. I haven't even started to conceptualize what an integrated space for viewing all captured content might look like. Maybe that's iTunes U, maybe that's MediaCore, maybe that's a custom interface (maybe it's all of the above?). I'd welcome other options or possibilities for us to explore as well. I'm excited at the potential of making it dead simple for us to start capturing more of the awesome stuff happening in our classrooms and share it with a wider audience of people on the web.
