Search and Replace within HTML files

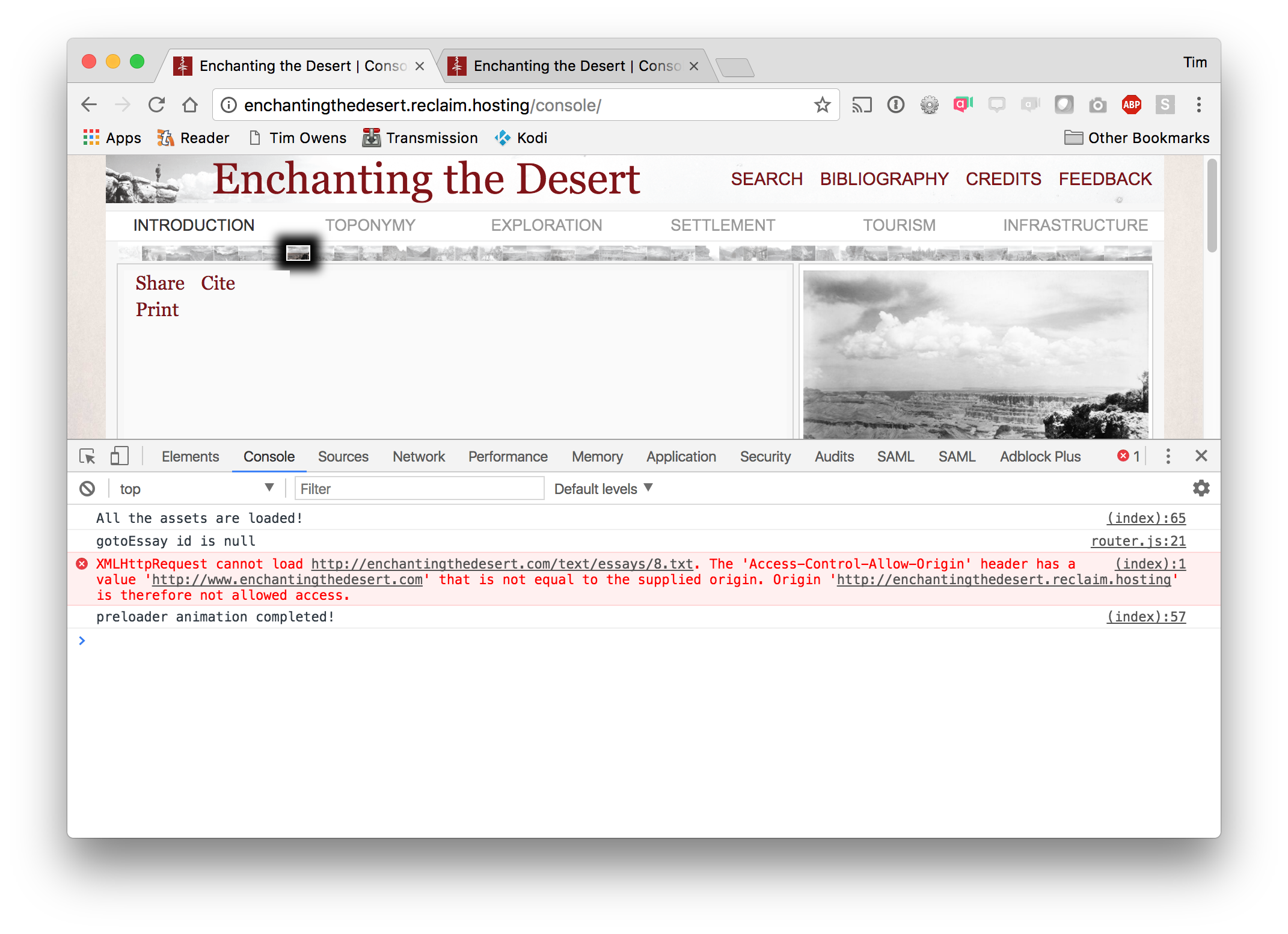

This is a quick writeup of a challenge I ran into yesterday on a support ticket that I figured was worth documenting here. Someone was moving an account to a new server and had set up a development URL to test all the files. After uploading them, things seemed to mostly work, but some data wasn't loading (for reference, this was a site built primarily from HTML, CSS, and images plus a lot of JavaScript, so no database and no PHP). When I checked the browser console for errors, I saw this:

So basically the site uses AJAX calls to load content dynamically, but those calls still pointed at the old URL rather than the development one. That made them cross-origin requests, and the browser blocked them with a CORS error.

I mentioned to the person that once the domain was moved this wouldn't be an issue, but there was no timeline for that, and they were really hoping to have a fully working version on the development URL in the meantime. So how to get all these references updated? There were a lot of files. I'm used to using grep to find references within files, but I'd never had to search and replace text across a bunch of files before.
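For comparison, the search-only half of the job (finding which files mention the old URL without changing anything) is something grep can do on its own. This is a minimal sketch: `list_references` is a made-up helper name, and the default search text is just the old domain from this ticket. The `-F` flag makes grep treat the text as a plain string, so the `.` in the domain isn't a wildcard.

```shell
#!/usr/bin/env bash
# list_references (hypothetical helper): print the path of every file under
# a folder that contains a given string, without modifying anything.
#   -r  search every file in every subfolder
#   -l  print only the names of matching files
#   -F  treat the search text as a plain string, so "." is not a wildcard
list_references() {
  local dir="${1:-.}"                           # folder to search, default: here
  local needle="${2:-enchantingthedesert.com}"  # text to look for
  grep -rlF -- "$needle" "$dir"
}

# Example, run from the site's root folder:
# list_references .
```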

Luckily, after looking through a few Stack Exchange threads, I found the command I needed:

```shell
find . -type f | xargs sed -i 's/enchantingthedesert.com/enchantingthedesert.reclaim.hosting/g'
```

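This is actually two commands joined together. The first, find, is simple: it just says "give me every file in every folder" below the current directory (if I wanted to narrow things down by file type I could have done so here, but I wanted it to look at everything). The pipe | then passes that list to xargs, which hands the file names to our second command, sed, which does the heavy lifting of searching for a string and replacing it. The -i flag tells sed to edit each file in place, and the g at the end of the s/old/new/g expression replaces every match on a line rather than just the first one. In this example enchantingthedesert.com was the old URL in the code and I was replacing it with enchantingthedesert.reclaim.hosting.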
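If you do want to narrow things down by file type, or the site has file names with spaces in them (plain `find | xargs` splits names on whitespace), a variation along these lines should work. It's a sketch that assumes GNU find, xargs, and sed on a Linux server (macOS's BSD sed wants `-i ''` instead of `-i`). The `replace_domain` name is hypothetical, and the list of extensions is only a guess at what a static site like this one contains. It also escapes the dots in the old domain, since an unescaped `.` in a sed pattern matches any character.

```shell
#!/usr/bin/env bash
# replace_domain (hypothetical helper): swap one domain for another in the
# text files of a static site, leaving images and other binaries untouched.
# Assumes GNU find, xargs and sed, and that neither domain contains "/" or "&".
replace_domain() {
  local dir="$1" old="$2" new="$3" old_re
  # Escape the dots so sed matches them literally instead of "any character".
  old_re=$(printf '%s' "$old" | sed 's/[.]/\\./g')
  # Only visit file types that can contain URLs on a static site, and use
  # -print0 / xargs -0 so file names containing spaces survive the pipe.
  # xargs -r skips running sed entirely if no files matched.
  find "$dir" -type f \( -name '*.html' -o -name '*.htm' -o -name '*.css' \
    -o -name '*.js' -o -name '*.json' \) -print0 |
    xargs -0 -r sed -i "s/${old_re}/${new}/g"
}

# Example, run from the site's root folder:
# replace_domain . enchantingthedesert.com enchantingthedesert.reclaim.hosting
```

Run from the site's root folder with `.` as the first argument, this does the same job as the one-liner above, just restricted to those file types.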

One command, and the site started working perfectly.